Most practices start looking at an AI receptionist after the same week from hell. Phones ring nonstop. Staff spend half the day repeating the same scheduling script. Missed calls pile up. Then a vendor demo makes it look easy. Connect the EMR, turn on the bot, and problems disappear.

That’s not how these projects work.

I’ve seen EMR integration projects for AI receptionists go well, and I’ve seen them stall because nobody mapped the scheduling rules, nobody tested handoffs, or nobody asked what happens when the AI writes half a record and the other half fails. The software matters, but the operating model matters more. If your intake logic, escalation paths, and EMR permissions are messy now, the AI will expose that fast.

The good news is that this can work extremely well if you treat it like an operational rollout instead of a phone add-on.

Before you integrate, audit your practice's readiness

This is the step teams rush most often, and it’s the step that decides whether the rest of the project works. A practice usually doesn’t have an “AI receptionist problem.” It has a workflow problem, a data problem, or a staffing coverage problem that the phone system is making obvious.

Ask what really happens from first call to booked visit

Don’t start with vendor features. Start with your current call path.

Walk through a normal patient interaction from the first ring to the final chart update. Do it for a new patient, an established patient, a refill request, a billing question, and an urgent symptom call. Most practices find that the phone tree on paper and the workflow in real life are not the same thing.

Use questions like these:

- Who answers first: Is it a person, voicemail, call queue, answering service, or overflow line after hours?

- What data gets captured: Name, date of birth, insurance, symptoms, preferred location, referral source, visit reason?

- Where does that data land: In the EMR, in sticky notes, in email, in a task queue, or nowhere reliable?

- Who owns exceptions: Prior auth questions, reschedules, language needs, angry patients, urgent clinical concerns?

If your team can’t answer those cleanly, don’t integrate yet. Fix the workflow first.

Verify that your EMR can actually support the workflow you want

A vendor saying “we integrate with Epic” or “we support athenahealth” doesn’t tell you much. What matters is what the integration can read, what it can write, and how fast those updates appear.

Ask your EMR or practice management vendor for direct answers on:

| Question | Why it matters |
|---|---|
| Does the system allow bi-directional API access? | Read-only access won’t support live scheduling or note writeback. |
| Are scheduling rules exposed through the API? | Double-booking protection often breaks here. |
| Can the integration write structured fields, not just notes? | Free-text alone creates cleanup work later. |
| Are audit logs available for third-party writes? | You need to see what the AI changed and when. |
| How are failed writes handled? | Partial sync issues are common and often ignored in sales calls. |

FHIR has made data exchange easier in many environments as adoption broadened after 2020, but older systems and custom builds still need more work. Some projects are straightforward. Others need custom mapping, cleanup rules, and real testing before anybody should touch live scheduling.

Practical rule: If the vendor can’t explain exactly which fields they read, which fields they write, and how they handle failures, you’re not looking at an implementation plan. You’re looking at a demo.
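One way to hold a vendor to that rule is to ask for a written field manifest and check it against the workflow you need. The sketch below is hypothetical: `IntegrationManifest`, `manifest_gaps`, and the field names are illustrative, not any vendor’s actual format.

```python
from dataclasses import dataclass, field


@dataclass
class IntegrationManifest:
    """Hypothetical summary of what a vendor says its integration does."""
    reads: set[str] = field(default_factory=set)    # EMR fields the AI can read
    writes: set[str] = field(default_factory=set)   # EMR fields the AI can write
    failure_handling: str = ""                      # how failed writes are surfaced
    audit_logged: bool = False                      # are third-party writes logged?


def manifest_gaps(m: IntegrationManifest, needed_writes: set[str]) -> list[str]:
    """Return plain-language gaps between the manifest and the workflow you need."""
    gaps = []
    missing = sorted(needed_writes - m.writes)
    if missing:
        gaps.append("cannot write: " + ", ".join(missing))
    if not m.failure_handling.strip():
        gaps.append("no documented failure handling")
    if not m.audit_logged:
        gaps.append("third-party writes are not audit-logged")
    return gaps


# A demo-grade answer: reads and writes a little, says nothing about failures.
demo = IntegrationManifest(reads={"patient", "slot"}, writes={"appointment"})
print(manifest_gaps(demo, {"appointment", "intake_note"}))
# ['cannot write: intake_note', 'no documented failure handling', 'third-party writes are not audit-logged']
```

An empty list doesn’t prove the integration works; it only means the vendor has answered the questions in writing.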

Set goals that operations can measure

“Improve efficiency” is too vague to run a project. You need targets your front desk manager and practice administrator can check every week.

Goal-setting matters because the ceiling is high. According to this implementation breakdown from Sunshine City Counseling, top systems can reach 85% to 95% call completion rates, and well-defined goals can target a 90% reduction in hold times or a 30% to 50% drop in no-shows through reminders.

Set a small number of operational goals, not a giant wish list. For example:

- Access goal: Raise answered-call coverage so fewer patients drop before speaking to someone.

- Scheduling goal: Reduce time from inbound call to booked appointment.

- Documentation goal: Cut manual re-entry from phone notes into the EMR.

- No-show goal: Use reminders and confirmation flows to reduce empty slots.

Readiness also includes security discipline. Before giving any new tool access to your patient communication stack, I like to review vendor access, identity controls, and external exposure. Teams that need a general security checkpoint before rollout can use something like GoSafe Dark Web monitoring for risk assessment to spot obvious account and exposure issues outside the EMR itself.

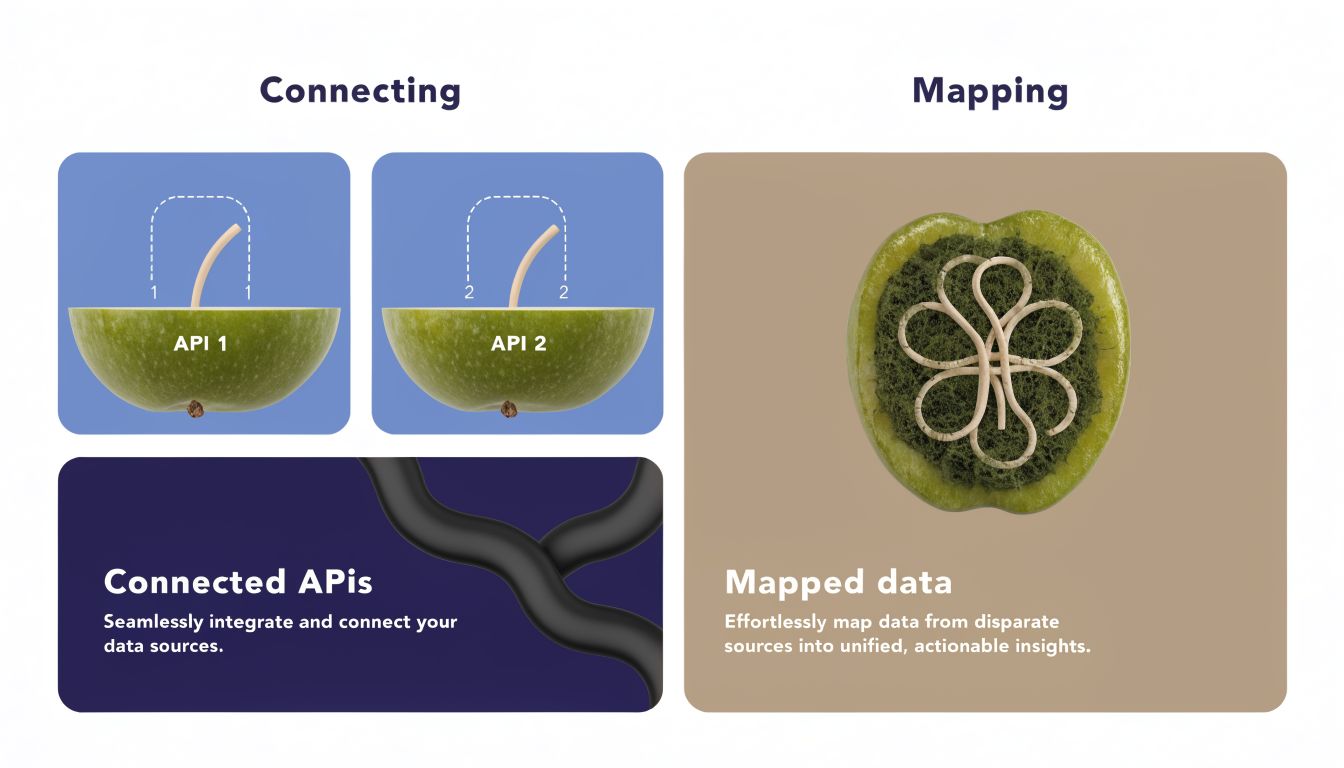

The core integration of connecting APIs and mapping data

The technical side gets described as if you plug two systems together and move on. In reality, the hard part isn’t the connection. It’s deciding exactly what data moves, when it moves, and which system wins when two people or two processes touch the same record.

Think of the API as a controlled doorway

An API is the doorway between the AI receptionist and the EMR. It lets the AI ask questions like “Is this patient already in the system?” or “Is Dr. Lee open at 3:30?” It also lets the AI write back things like appointment bookings, intake details, and call summaries if permissions allow it.

That doorway can be wide open, tightly limited, or strangely shaped. FHIR-based access usually gives you more standard behavior. Older custom APIs often need exceptions for field names, scheduling logic, location IDs, or insurance fields.
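For FHIR-based access, the two questions above map onto standard FHIR R4 searches: `Patient` supports `family`, `given`, and `birthdate` parameters, and `Slot` supports `schedule`, `status`, and `start`. This minimal sketch only builds the request URLs. The base URL and schedule ID are placeholders, and a real EMR may restrict which search parameters it supports.

```python
from urllib.parse import urlencode

# Placeholder endpoint; every EMR publishes its own FHIR base URL.
FHIR_BASE = "https://fhir.example-emr.test/r4"


def patient_search_url(family: str, given: str, birthdate: str) -> str:
    """'Is this patient already in the system?' as a FHIR Patient search."""
    params = {"family": family, "given": given, "birthdate": birthdate}
    return f"{FHIR_BASE}/Patient?{urlencode(params)}"


def free_slot_search_url(schedule_id: str, start_from: str, start_before: str) -> str:
    """'Is Dr. Lee open at 3:30?' as a FHIR Slot search on one provider's schedule.

    Two 'start' parameters express a window: ge = on or after, lt = before.
    In production, include a timezone offset in both timestamps.
    """
    params = [
        ("schedule", f"Schedule/{schedule_id}"),
        ("status", "free"),
        ("start", f"ge{start_from}"),
        ("start", f"lt{start_before}"),
    ]
    return f"{FHIR_BASE}/Slot?{urlencode(params)}"


print(patient_search_url("Rivera", "Ana", "1990-04-02"))
print(free_slot_search_url("dr-lee", "2025-06-12T15:30:00", "2025-06-12T16:00:00"))
```

Older custom APIs won’t accept these URLs as-is, which is exactly where the field-name and location-ID exceptions show up.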

If you want a plain-English walkthrough of the integration layer itself, this guide on EMR system integration is a useful reference for how two-way connections are typically structured in practice.

Data mapping is where projects quietly succeed or fail

Here’s a simple example. A patient calls and says:

- She’s a new patient

- Her name and date of birth

- She wants dermatology

- She prefers the downtown location

- She has a rash that started two days ago

- She has commercial insurance

- She’s available this Thursday afternoon

That sounds simple until you map it.

Some of that belongs in demographic fields. Some belongs in scheduling metadata. Some belongs in a triage or intake note. Some may require a structured symptom field, while another EMR only accepts free text in a reason-for-visit box. If the AI dumps everything into one long note, your staff still have to clean it up.

Good mapping answers questions like:

- Which fields are required: Name, DOB, phone, provider, location, visit type, insurance carrier

- Which fields are optional: Referral source, secondary phone, preferred language

- Which values must match exact picklists: Provider names, appointment types, location codes

- What happens if a field is missing: Hold the booking, create a task, or transfer to staff
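Those mapping questions can be encoded as a validation step that runs before anything is written to the EMR. This is a hypothetical sketch: the field names, the picklist codes, and the three outcomes (`book`, `hold_and_task`, `transfer`) stand in for your own EMR’s values and your practice’s rules.

```python
# Hypothetical required fields and picklists; replace with your EMR's real ones.
REQUIRED = ["name", "dob", "phone", "provider", "location", "visit_type", "insurance_carrier"]

PICKLISTS = {
    "location": {"DOWNTOWN", "NORTH"},
    "visit_type": {"NEW_DERM", "FOLLOWUP_DERM"},
}


def validate_booking(call_data: dict) -> tuple[str, list[str]]:
    """Decide what to do with captured call data before writing to the EMR.

    Returns (action, problems). A value outside a picklist goes to a human,
    because guessing a location or visit-type code creates silent errors.
    Missing required fields hold the booking and create a staff task.
    """
    problems = []
    missing = [f for f in REQUIRED if not call_data.get(f)]
    if missing:
        problems.append("missing: " + ", ".join(missing))

    bad_picklist = False
    for fld, allowed in PICKLISTS.items():
        value = call_data.get(fld)
        if value and value not in allowed:
            problems.append(f"{fld} '{value}' not in picklist")
            bad_picklist = True

    if bad_picklist:
        return "transfer", problems
    if missing:
        return "hold_and_task", problems
    return "book", problems
```

For the dermatology caller above, a captured location of “Downtown” instead of the code `DOWNTOWN` would route to staff rather than book into the wrong place.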

A lot of practices miss this point. They test whether the AI can book. They don’t test whether it books into the right appointment type, under the right provider, with the right instructions attached.

Real-time only matters if conflict handling works

Integration claims are where vendor language gets slippery. Many vendors advertise “real-time” integration, but practices need to ask what happens when an AI and a staff member try to book the same slot at the same time. True bi-directional sync has to reconcile those conflicts to avoid blind spots, especially with older, non-cloud EMRs, as noted by MedReception’s write-up on EMR integration failure modes.

A booking flow is not safe just because it looks fast. It’s safe when conflicts, retries, and partial failures are visible and recoverable.
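One common way to make those conflicts visible is optimistic concurrency: each slot carries a version, and a write succeeds only if the version the writer read is still current. FHIR servers that support version-aware updates expose this through ETags and the `If-Match` header. The sketch below is an in-memory stand-in; `SlotStore`, `ConflictError`, and the slot IDs are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Slot:
    slot_id: str
    booked_by: Optional[str] = None
    version: int = 1


class ConflictError(Exception):
    """Raised when the slot is taken or changed after the writer read it."""


class SlotStore:
    """Hypothetical in-memory stand-in for the EMR's schedule."""

    def __init__(self, slot_ids):
        self._slots = {s: Slot(s) for s in slot_ids}

    def read(self, slot_id: str) -> Slot:
        s = self._slots[slot_id]
        return Slot(s.slot_id, s.booked_by, s.version)  # a copy, like an API response

    def book(self, slot_id: str, who: str, expected_version: int) -> Slot:
        current = self._slots[slot_id]
        if current.version != expected_version or current.booked_by is not None:
            # Surface the conflict instead of silently overwriting the other booking.
            raise ConflictError(f"{slot_id} is taken or changed since read (now v{current.version})")
        current.booked_by = who
        current.version += 1
        return self.read(slot_id)


store = SlotStore(["thu-1530"])
ai_view = store.read("thu-1530")
staff_view = store.read("thu-1530")
store.book("thu-1530", "front_desk", staff_view.version)  # staff wins the race
try:
    store.book("thu-1530", "ai_receptionist", ai_view.version)
except ConflictError as err:
    print("route to exception queue:", err)
# route to exception queue: thu-1530 is taken or changed since read (now v2)
```

The AI’s losing write doesn’t vanish; it becomes a visible item that someone can resolve by offering the caller another time.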

I also tell teams to pay attention to the phone layer. If calls are forwarded to an offsite AI agent, caller identity needs to pass through cleanly so the AI can match records correctly. This explainer on Hosted Telecommunications' AI caller ID is a good example of the telephony detail that often gets ignored until matching fails.
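Once the caller’s number arrives, matching should normalize it and refuse to guess when more than one chart shares the number, which is common with family callers. This sketch assumes US numbers and a hypothetical list of chart records with `chart_id` and `phone` keys. A phone match only narrows the search; identity still has to be confirmed with name and date of birth.

```python
import re
from typing import Optional


def normalize_us_phone(raw: str) -> Optional[str]:
    """Reduce a caller ID string to 10 digits; None if it isn't a US number."""
    digits = re.sub(r"\D", "", raw or "")
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]  # drop the +1 country code
    return digits if len(digits) == 10 else None


def match_caller(caller_id: str, charts: list[dict]) -> tuple[str, list[str]]:
    """Return ('unique' | 'ambiguous' | 'none', matching chart IDs).

    'ambiguous' should trigger identity questions, never an automatic pick.
    """
    number = normalize_us_phone(caller_id)
    if number is None:  # blocked or malformed caller ID
        return "none", []
    hits = [c["chart_id"] for c in charts if normalize_us_phone(c.get("phone", "")) == number]
    if len(hits) == 1:
        return "unique", hits
    return ("ambiguous", hits) if hits else ("none", [])
```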

Don’t ignore partial write failures

A common failure pattern looks like this:

| Event | What staff sees | Actual problem |
|---|---|---|
| Appointment created | Schedule looks correct | Intake note failed to write |
| Note written | Chart looks updated | Booking never finalized |
| Insurance captured | Demographics look fine | Carrier mapped to wrong field |
| Cancellation logged | Task appears in queue | Slot never reopened in schedule |

This is why I push for exception queues. If a write fails, the system should flag it for review, not leave staff guessing. “Real-time” without a recovery path creates hidden work, and hidden work is why teams lose faith in the system.
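The exception-queue idea can be sketched as a writer that runs each step of a booking in order, records what landed, and queues anything incomplete for review instead of reporting success. The step names and callables here are hypothetical stand-ins for real EMR API calls.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class WriteResult:
    call_id: str
    done: list[str] = field(default_factory=list)      # steps that succeeded
    failed_step: Optional[str] = None                  # first step that failed
    error: str = ""
    skipped: list[str] = field(default_factory=list)   # steps never attempted


def write_booking(call_id: str, steps: dict[str, Callable[[], None]],
                  exception_queue: list[WriteResult]) -> WriteResult:
    """Run write steps in order; on the first failure, stop and queue for review."""
    result = WriteResult(call_id)
    names = list(steps)
    for i, name in enumerate(names):
        try:
            steps[name]()
        except Exception as exc:  # a real client would catch its own error types
            result.failed_step = name
            result.error = str(exc)
            result.skipped = names[i + 1:]
            exception_queue.append(result)
            break
        result.done.append(name)
    return result
```

Stopping at the first failure is a design choice: it avoids writing a note for a booking that never finalized (the second row in the table), and the queued record tells staff exactly which pieces exist and which are still missing.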

Designing the human-AI handoff and workflows

The best AI receptionist setups don’t try to automate every call. They sort calls quickly, handle the routine ones cleanly, and hand off the rest with enough context that staff don’t have to start over.

Much of the value sits in that handoff design.

What a good handoff sounds like

A patient calls to book an annual follow-up. The AI verifies identity, checks location preference, offers available times, books the visit, and logs the interaction. Nobody on staff touches it.

Another patient calls and says he has chest pressure, feels weak, and isn’t sure whether he should wait for tomorrow. That call should not bounce through a generic menu. The system should score urgency and route immediately to a clinical escalation path, based on the workflow your practice approved.

Modern AI receptionists can do more than route calls. They can use urgency scoring to decide when a human is needed, and according to VoxyHealth’s explanation of EMR-connected AI receptionists, that kind of escalation support can cut front desk workload by up to 80%.
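To make “urgency scoring” concrete, here is a deliberately simple keyword-weight sketch. It is illustrative only: the terms, weights, threshold, and route names are hypothetical, commercial systems use their own models, and any real rule set has to come from your practice’s clinically approved escalation workflow.

```python
# Hypothetical weights; a real list must come from clinical leadership.
URGENT_TERMS = {
    "chest pressure": 5,
    "chest pain": 5,
    "trouble breathing": 5,
    "weak": 2,
    "dizzy": 2,
    "fever": 1,
}
ESCALATE_AT = 5  # hypothetical threshold for immediate clinical routing


def urgency_score(transcript: str) -> int:
    """Sum the weights of every listed term that appears in the transcript."""
    text = transcript.lower()
    return sum(weight for term, weight in URGENT_TERMS.items() if term in text)


def route(transcript: str) -> str:
    score = urgency_score(transcript)
    if score >= ESCALATE_AT:
        return "clinical_escalation"   # warm transfer per the approved workflow
    if score > 0:
        return "nurse_callback_task"
    return "standard_flow"
```

Substring matching also flags “no chest pain,” which errs toward escalation. That is usually the safer failure, but it shows why any vendor’s scoring needs to be tested against real call language before go-live.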

Where teams get the handoff wrong

The bad version is easy to spot. The AI collects a long story, transfers the call, and the staff member asks, “Can you tell me again why you’re calling?” That doesn’t save time. It irritates patients and makes staff think the tool is useless.

The better version is a warm transfer or a structured callback task with context attached. Staff should see the reason for the call, any urgency signals, the patient identity status, what the AI already attempted, and what still needs a human decision.

For many practices, this means building separate flows for:

- New patients: registration, insurance capture, provider matching

- Existing patients: reschedules, refill routing, result questions

- Administrative calls: billing, directions, fax status, referrals

- Clinical concern calls: symptom-based triage and staff escalation

- After-hours calls: urgent routing versus next-business-day task creation
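The context staff should see at handoff can be pinned down as a structured record, so nothing depends on the caller repeating themselves. The field names and enum values below are hypothetical; map them to whatever task or message type your EMR supports. The `completion` and `owner_role` fields force a decision about where the handoff lands and who owns it.

```python
from dataclasses import dataclass, field
from enum import Enum


class Flow(Enum):
    NEW_PATIENT = "new_patient"
    EXISTING_PATIENT = "existing_patient"
    ADMINISTRATIVE = "administrative"
    CLINICAL_CONCERN = "clinical_concern"
    AFTER_HOURS = "after_hours"


class Completion(Enum):
    LIVE_TRANSFER = "live_transfer"
    EMR_MESSAGE = "emr_message"
    PM_TASK = "pm_task"
    ROLE_CALLBACK = "role_callback"


@dataclass
class Handoff:
    flow: Flow
    reason: str
    identity_verified: bool
    urgency_signals: list[str] = field(default_factory=list)
    ai_attempted: list[str] = field(default_factory=list)
    needs_human_decision: list[str] = field(default_factory=list)
    completion: Completion = Completion.PM_TASK
    owner_role: str = ""

    def problems(self) -> list[str]:
        """Reasons this handoff would leave staff starting over or guessing."""
        issues = []
        if not self.reason.strip():
            issues.append("no call reason captured")
        if not self.needs_human_decision:
            issues.append("unclear what the human must decide")
        if not self.owner_role:
            issues.append("no owner role for follow-up")
        return issues
```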

The workflow has to match real front desk behavior

I like to review handoff rules with the people who answer phones, not just leadership. They know where scripts break. They know which insurance questions always spiral. They know which providers insist on specific scheduling logic.

If you’re evaluating live call handling models, this overview of a virtual medical receptionist gives a practical picture of where AI fits and where staff still need to step in.

Field note: If your staff say, “We’ll just fix it later,” that usually means the handoff design is weak. Cleanup work becomes permanent work.

One more point that gets missed. Decide what “handoff complete” means. Is it a live transfer? A message in the EMR? A task in the practice management queue? A callback assignment to a specific role? If you don’t define that up front, calls fall into a gray zone where everyone assumes someone else owns the follow-up.

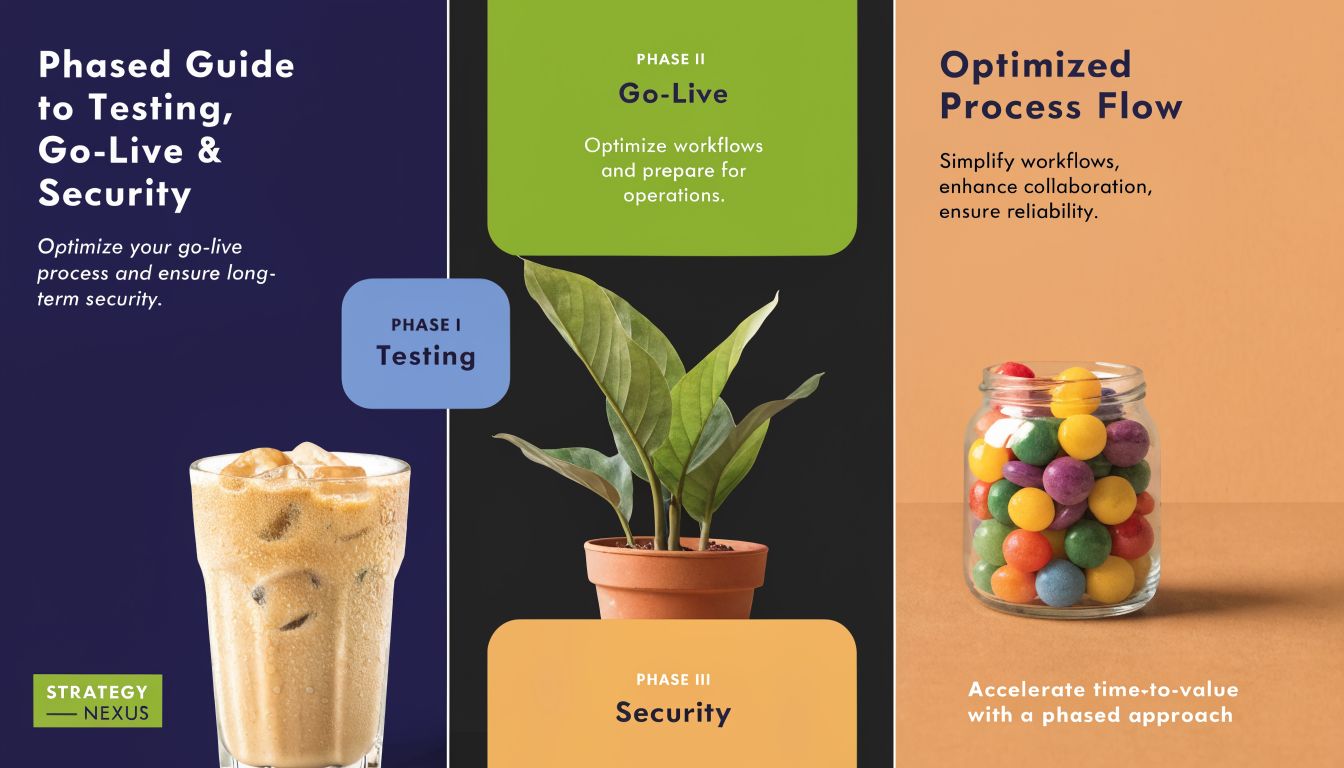

A phased guide to testing, go-live, and security

A rushed go-live is how practices end up blaming the AI for problems they never tested. Phone workflows touch access, scheduling, staff behavior, and patient trust, so you need a rollout plan that limits risk.

Pilot with a narrow slice of real work

Start with a contained pilot. Pick a location, one provider group, or a limited call type such as routine scheduling. Don’t try to move every inbound call on day one.

I usually want the pilot to answer a few hard questions:

- Can the AI identify patients correctly: Especially with duplicate charts, nicknames, or family callers

- Can it follow scheduling rules: New versus return patient, provider restrictions, visit-type limits

- Can staff review what happened: Call logs, booking actions, note writebacks, escalations

- Can the team recover from failure: Manual takeover, rollback, queue review, missed-write correction

Security starts here, not later. Confirm the BAA is signed. Review access permissions. Check how the system encrypts stored and transmitted data. Make sure your compliance lead or privacy officer can see audit logs for any chart or schedule action the AI takes.

Use user acceptance testing like operations, not theater

A lot of teams do UAT badly. They test the happy path once, everyone nods, and they call it done.

You need scenario-based testing that reflects actual patient behavior. According to Sully AI’s implementation analysis, 80% to 85% of AI projects fail because of poor data or integration issues, while successful deployments can reach 88% patient satisfaction, outperforming human staff when implementation avoids the common pilot failures. That gap is why rigorous testing matters.

A good UAT set includes cases like:

| Test scenario | What to verify |
|---|---|
| Existing patient reschedules | Correct chart match, slot update, cancellation rules |
| New patient booking | Demographics, insurance capture, appointment type |
| Urgent symptom mention | Immediate escalation path and clear staff alert |
| Caller asks for lab status | Proper retrieval limits and safe response |
| Mid-call disconnect | Retry logic, task creation, partial-write review |

“If your test script doesn’t include failure cases, you’re not testing the implementation. You’re rehearsing the demo.”
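Scenarios like these can live in a table-driven suite that runs against a sandbox before every configuration change. In this sketch, `handle_call` is a hypothetical stand-in for whatever test interface your vendor provides; the point is that failure cases, such as a mid-call disconnect, sit in the same table as the happy paths.

```python
def handle_call(scenario: dict) -> dict:
    """Hypothetical stand-in for a vendor sandbox; replace with real test calls."""
    if scenario.get("disconnect_mid_call"):
        return {"booked": False, "task_created": True, "escalated": False}
    if scenario.get("urgent_symptom"):
        return {"booked": False, "task_created": False, "escalated": True}
    return {"booked": True, "task_created": False, "escalated": False}


SCENARIOS = [
    # (name, simulated call, outcome fields that must match)
    ("existing patient reschedules", {"intent": "reschedule"}, {"booked": True}),
    ("urgent symptom mention", {"urgent_symptom": True}, {"escalated": True, "booked": False}),
    ("mid-call disconnect", {"disconnect_mid_call": True}, {"task_created": True, "booked": False}),
]


def run_uat() -> list[str]:
    """Return failure descriptions; an empty list means every scenario passed."""
    failures = []
    for name, inputs, expected in SCENARIOS:
        outcome = handle_call(inputs)
        for key, want in expected.items():
            if outcome.get(key) != want:
                failures.append(f"{name}: expected {key}={want}, got {outcome.get(key)}")
    return failures


print(run_uat() or "all scenarios passed")
```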

Prepare go-live like a controlled cutover

Go-live day should feel boring. That’s the goal.

Don’t switch every line at once if you can avoid it. Keep a fallback path active. Give staff a clear escalation tree for technical issues and a separate one for patient safety issues. Tell them who owns schedule corrections, who reviews failed syncs, and who monitors call transcripts or summaries for the first week.

For the first few days, review these items daily:

- Exception queue: Failed writes, duplicate bookings, unmatched patients

- Escalation queue: Clinical concerns, distressed callers, billing edge cases

- Audit activity: Who changed what in the EMR and whether the action was expected

- Staff feedback: Repeated cleanup tasks usually point to a mapping issue, not “user resistance”
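The daily review goes faster when queue entries are counted by type, so repeated problems stand out from one-offs. A small sketch over hypothetical queue entries; the `kind` labels and the repeat threshold are illustrative.

```python
from collections import Counter


def daily_summary(entries: list[dict], repeat_threshold: int = 3) -> dict:
    """Count queue entries per kind and flag kinds that repeat often enough
    to suggest a mapping or workflow problem rather than a one-off."""
    counts = Counter(e["kind"] for e in entries)
    repeated = sorted(k for k, n in counts.items() if n >= repeat_threshold)
    return {"counts": dict(counts), "likely_systemic": repeated}
```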

Practices that get this right treat security and operational QA as the same conversation. If you can’t trace what the AI did in the chart, you don’t have enough control to trust the rollout.

Measuring success and proving your return on investment

If you only ask whether staff “like it,” you won’t know whether the project is working. You need a small KPI set that connects call handling to schedule utilization, labor, and patient access.

Practices using integrated AI receptionists have reported 30% to 50% fewer missed calls, 15% to 25% more appointment bookings, and 20% to 40% less staff overtime, according to Sully AI’s 2025 review of medical receptionist platforms. Those are useful benchmarks, but they only matter if your own baseline is clean.

Track the metrics that change operations

I’d watch these first:

- Missed or abandoned calls: Pull this from your phone system dashboard. It tells you whether access improved.

- Appointment booking rate: Compare inbound scheduling conversations to completed bookings in the EMR.

- Staff overtime tied to phones: Payroll and manager logs usually show this clearly.

- No-show rate by appointment type: Measure this in the EMR before and after reminder and confirmation changes.

- Escalation rate and cleanup workload: If too many calls or records still need manual rescue, the workflow needs tuning.
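Computing the same ratios the same way before and after go-live is what makes the comparison fair. A sketch with hypothetical input names; pull the counts from your phone system and EMR reports for matching date ranges.

```python
from dataclasses import dataclass


@dataclass
class PeriodCounts:
    inbound_calls: int
    abandoned_calls: int
    scheduling_calls: int
    bookings: int
    scheduled_visits: int
    no_shows: int


def kpis(p: PeriodCounts) -> dict[str, float]:
    """Core ratios for one period (assumes nonzero denominators)."""
    return {
        "abandon_rate": p.abandoned_calls / p.inbound_calls,
        "booking_rate": p.bookings / p.scheduling_calls,
        "no_show_rate": p.no_shows / p.scheduled_visits,
    }


def relative_change(before: PeriodCounts, after: PeriodCounts) -> dict[str, float]:
    """Relative change per KPI; negative is an improvement for abandon and no-show rates."""
    b, a = kpis(before), kpis(after)
    return {k: (a[k] - b[k]) / b[k] if b[k] else float("nan") for k in b}
```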

For teams building a more formal ROI model, this guide on how to evaluate investment profitability is a useful primer for organizing savings, costs, and payback assumptions.

Use a simple ROI formula

Keep the math plain:

| ROI input | What to include |
|---|---|
| Gains | Added booked visits, reduced missed calls, lower overtime, less manual admin work |
| Costs | Subscription fees, implementation fees, telephony changes, staff training, internal project time |
| Formula | (Total gains - total costs) / total costs |

This doesn’t need to become finance theater. The point is to compare monthly cost against measurable operational gains.
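The formula translates directly into code. The figures below are invented placeholders that show the arithmetic, not benchmarks; one-time implementation fees are amortized into a monthly amount so they can sit next to the subscription.

```python
def roi(total_gains: float, total_costs: float) -> float:
    """ROI = (total gains - total costs) / total costs, as a fraction."""
    if total_costs <= 0:
        raise ValueError("total costs must be positive")
    return (total_gains - total_costs) / total_costs


# Placeholder monthly figures, for illustration only.
gains = {"added_visits": 12_000, "overtime_saved": 3_000, "admin_hours_saved": 1_500}
costs = {
    "subscription": 2_500,
    "implementation_fees_amortized": 1_000,  # one-time fees spread over the payback period
    "telephony": 500,
    "training_and_project_time": 2_000,
}

print(f"ROI: {roi(sum(gains.values()), sum(costs.values())):.0%}")
# ROI: 175%
```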

If you want a healthcare-specific KPI structure for voice automation, this page on measuring success for AI voice agents in healthcare gives a practical list of what to monitor after launch.

Don’t ignore the softer signals

Some wins won’t show up in the first spreadsheet. Staff stop dreading the phone queue. Patients get answers after hours. Managers spend less time plugging schedule gaps caused by missed calls and weak follow-up.

Those gains matter, but I still tell teams the same thing. If you can’t tie your EMR integration and AI receptionist rollout to call handling, booking, labor, and follow-through, you don’t have proof yet. You have a good feeling.

Frequently asked questions about EMR and AI integration

The questions below usually come up after the demo, once a practice manager starts thinking about contracts, IT time, and what can go wrong in the first month.

| Question | Answer |
|---|---|
| How long does integration usually take? | It depends heavily on your EMR, telephony setup, and workflow complexity. Small practices with older systems or non-standard workflows need to be careful here. Public guidance from Confido notes hidden costs can include 3 to 6 months of setup and $15,000 to $50,000 in professional services for practices with older EMRs or unusual workflows, which can turn a low monthly price into a real capital project, as described in Confido’s buyer guide for healthcare AI receptionists. |
| Will the AI replace my front desk staff? | Usually no. In well-run deployments, the AI takes repetitive work off the desk and sends exception cases to people. The staff role changes from answering every call to managing escalations, edge cases, and patient situations that need judgment. |
| What’s the biggest technical risk? | Partial sync and conflict handling. If an appointment writes but the note fails, or if staff and AI touch the same slot at once, you need clear reconciliation rules and an exception queue. |
| Can a small practice do this without internal IT? | Yes, but only if the vendor’s implementation scope is explicit. Small teams should ask who owns field mapping, telephony setup, testing, training, and support during go-live. If those answers are vague, the project usually drifts back onto office staff. |
| What should I insist on in the contract? | Spell out EMR write capabilities, audit logging, security responsibilities, support response expectations, rollback options, and who fixes mapping issues found after launch. If it isn’t in writing, assume it’s not included. |

If your practice is sorting through vendors and trying to figure out what’s realistic, Simbie AI is one option built for healthcare voice workflows with EMR-connected call handling. The right next step isn’t a full rollout. It’s a disciplined discovery session where you map call flows, confirm write permissions, define handoffs, and test the hard cases before patients ever feel the switch.